Entropy is used by analysts and market technicians to describe the level of error that can be.

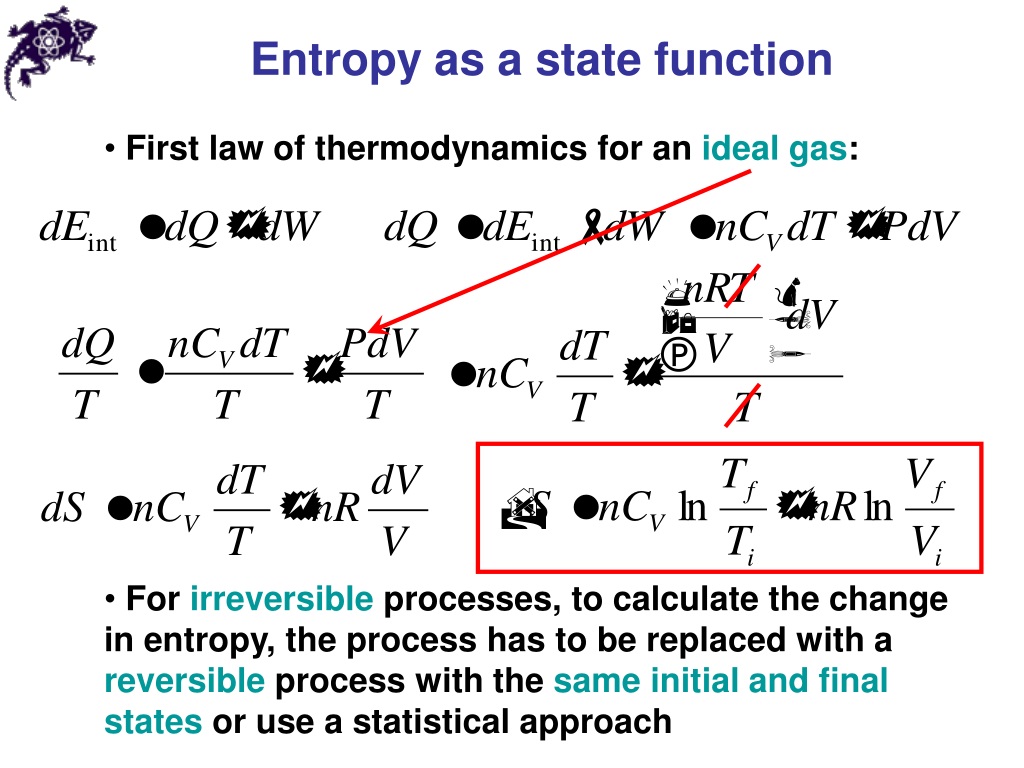

C in Eq. 2.3 is an undetermined constant, which is unimportant since we are always interested in changes in entropy, so it is convenient to set C = 0. The use here of the notation ΔS instead of dS indicates that Boltzmann's expression is valid for any size change of entropy.

Entropy refers to the degree of randomness or uncertainty pertaining to a market or security. There are several different definitions: entropy is first of all a measure of disorder, a measure of how many ways you can reorganize a given system. Entropy also describes how much energy is not available to do work. Although all forms of energy can be used to do work, it is not possible to use the entire available energy for work. The more disordered a system and the higher its entropy, the less of the system's energy is available to do work.

Thomas Moore has developed a web application that models our two Einstein solid system. It allows the user to explore how, as the size of the system increases, the probability of the most likely energy configuration increases relative to other, less likely configurations. To see this in more detail, consider reading this paper and performing some of the interactive demonstrations therein.

Essential to the Boltzmann definition of entropy is that energy is quantized. Our analysis works because there is a finite number of microstates for each energy configuration. The fact that energy comes in integer multiples of quanta makes the number of microstates countable. If each bond of our Einstein solid could have any value of energy (such as 1.25 or 345.8461 quanta), then there would be an infinite number of microstates for any energy configuration. This is yet another piece of evidence that our universe is governed by quantum, rather than classical, physics.
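The microstate counting described above can be tried out directly. The following is only a rough sketch, not Thomas Moore's application: it assumes the standard textbook count for an Einstein solid, where `n` oscillators sharing `q` quanta have C(q + n − 1, q) microstates, and every function name here is made up for illustration. The off-peak configuration used for comparison (one quarter of the quanta in solid A) is an arbitrary choice.

```python
from math import comb, log

K_B = 1.380e-23  # Boltzmann's constant in J/K, as quoted in this post


def multiplicity(n_oscillators: int, quanta: int) -> int:
    """Microstates of one Einstein solid (assumed stars-and-bars count)."""
    return comb(quanta + n_oscillators - 1, quanta)


def macrostate_table(n_a: int, n_b: int, q_total: int) -> list[tuple[int, int]]:
    """(quanta in solid A, number of microstates) for every energy split."""
    return [
        (q_a, multiplicity(n_a, q_a) * multiplicity(n_b, q_total - q_a))
        for q_a in range(q_total + 1)
    ]


def boltzmann_entropy(microstates: int) -> float:
    """S = k_B ln W, with the undetermined constant C set to 0."""
    return K_B * log(microstates)


def peak_to_offpeak_ratio(n: int, q_total: int) -> float:
    """How much more likely the even split is than a lopsided one.

    Compares the even split (q_total // 2 quanta in solid A) with the
    split that puts q_total // 4 quanta in solid A, for two equal solids.
    """
    table = dict(macrostate_table(n, n, q_total))
    return table[q_total // 2] / table[q_total // 4]


if __name__ == "__main__":
    # Two small solids, 3 oscillators each, sharing 6 quanta.
    for q_a, w in macrostate_table(3, 3, 6):
        print(f"q_A = {q_a}: W = {w:4d}, S = {boltzmann_entropy(w):.3e} J/K")

    # The even split dominates more strongly as the system grows.
    for n, q in [(3, 8), (30, 80), (300, 800)]:
        print(f"N = {n:3d}, q = {q:3d}: ratio = {peak_to_offpeak_ratio(n, q):.3e}")
```

Because the quanta are integers, `macrostate_table` is a finite list. That is the countability the quantization argument relies on.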
Entropy is a thermodynamic quantity that is generally used to describe the course of a process, that is, whether it is a spontaneous process and has a probability of occurring in a defined direction, or a non-spontaneous process and will not proceed in the defined direction, but in the reverse direction.

The formal definition of entropy doesn't provide us with much physical intuition. It helps to think about entropy as a measure of the spread of energy.

Entropy is a very weird and misunderstood quantity. Hopefully, this video can shed some light on the 'disorder' we find ourselves in.

So why is "disorder" a misleading interpretation of entropy? There are two main problems:

1. Disorder is a subjective description that has no rigorous definition.
2. Perhaps more importantly, disorder is usually taken to be a comment on the arrangement of a single microstate, yet entropy is defined in terms of all of the microstates for an energy configuration.

Frank Lambert has written many articles on interpreting the meaning of entropy.

So, if entropy is not disorder, what is it? The formal definition offered by Ludwig Boltzmann (and later written on his tombstone) is $S = k_B \ln W$, where $S$ is the entropy of the system in a particular energy configuration, $k_B = 1.380 \times 10^{-23}$ J/K (Boltzmann's constant), and $\ln W$ is the natural logarithm of the number of microstates for that energy configuration, or macrostate.

In information theory, the entropy of a discrete random variable $X$ is defined as

$$H(X) := -\sum_{x \in \mathcal{X}} p(x) \log p(x) = \mathbb{E}[-\log p(X)]$$

To understand the meaning of $-\sum_i p_i \log(p_i)$, first define an information function $I$ in terms of an event $i$ with probability $p_i$. The amount of information acquired due to the observation of event $i$ follows from Shannon's solution of the fundamental properties of information:

- $I(p)$ is monotonically decreasing in $p$: an increase in the probability of an event decreases the information from an observed event, and vice versa.
- $I(1) = 0$: events that always occur do not communicate information.
- $I(p_1 \cdot p_2) = I(p_1) + I(p_2)$: the information learned from independent events is the sum of the information learned from each event.

Entropy can be normalized by dividing it by information length. This ratio is called metric entropy and is a measure of the randomness of the information.

Uniform probability yields maximum uncertainty and therefore maximum entropy. Entropy, then, can only decrease from the value associated with uniform probability. The extreme case is that of a double-headed coin that never comes up tails, or a double-tailed coin that never results in a head. In that case the entropy is zero: each toss of the coin delivers no new information, as the outcome of each coin toss is always certain.
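The coin examples and the properties of the information function can be checked numerically. This is a minimal sketch, assuming base-2 logarithms (so results are in bits; the text does not fix a base) and using the common choice I(p) = −log(p) as the information function. The function names are illustrative only.

```python
from math import isclose, log2


def information(p: float) -> float:
    """Information, in bits, from observing an event of probability p."""
    return -log2(p)


def shannon_entropy(probabilities: list[float]) -> float:
    """H = -sum(p_i * log2(p_i)); outcomes with p_i == 0 contribute nothing."""
    return sum(p * information(p) for p in probabilities if p > 0)


if __name__ == "__main__":
    fair_coin = [0.5, 0.5]
    biased_coin = [0.9, 0.1]
    double_headed_coin = [1.0, 0.0]

    # Uniform probability gives the largest entropy for a two-outcome system.
    print("fair coin:         ", shannon_entropy(fair_coin))
    print("biased coin:       ", shannon_entropy(biased_coin))
    print("double-headed coin:", shannon_entropy(double_headed_coin))

    # Additivity for independent events: I(p1 * p2) = I(p1) + I(p2).
    p1, p2 = 0.5, 0.25
    print(isclose(information(p1 * p2), information(p1) + information(p2)))
```

Changing the logarithm base only rescales the result (for example, natural logarithms give nats instead of bits), so the ordering of the three coins is unaffected.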
